Instant Intelligence

AI that understands while you speak — not after. Streaming intelligence across voice, text, and visual signals.

Self-Hosted Voice AI Gateway

Quick Start

# Self-host the full stack. Works on macOS, Linux, and WSL.

$ curl -fsSL https://whissle.ai/install.sh | bash

Pulls the Docker image, configures API keys, and starts with Docker Compose.

Whissle bridges the gap between discriminative and generative AI.

A modular intelligence layer that converts any stream — audio, text, or video — into transcripts, emotion, intent, and actionable insights. Instantly, privately, at scale.

Real-time natural language tokens

Traditional ASR systems transcribe quickly but miss deeper meaning. Context, emotion, and intent disappear the moment words are captured.

Multi-modal Intelligence

Multi-modal LLMs offer richer insights but can't keep up in real time. You shouldn't have to choose between depth and speed.

Solutions

Built for your industry

One streaming intelligence model — META-1 with 5,500+ action tokens across 11 domains — powering every solution through a single ASR pass. Same architecture, different vocabularies.

Contact Centers & Collections

AI voice agents that resolve calls in 30 seconds instead of 2 minutes. Real-time intent detection, emotion-aware routing, and code-mixed language support for multilingual call centers.

Sales Intelligence

AI coaching scorecards, emotion timelines, and speech pattern analysis for every sales call. Know what your best reps do differently — backed by per-utterance data, not opinions.

Behavioral AI & Psychotherapy

Automated behavioral coding for therapy sessions (reflections, questions, change talk, empathy), extracted in real time from speech. MISC-aligned fidelity scoring during the session, not after.

3D Avatar Agents

Interactive AI avatars for education, training, and behavioral assessment. Students practice with lifelike 3D agents that evaluate fluency, empathy, and communication skills in real time.

Smart Infrastructure

Voice-first queries over surveillance, ANPR, and IoT databases. Compliance monitoring, staff assessment, and fatigue detection — all through natural language on-site.

Language Assessment

Fluency, vocabulary, grammar, pronunciation, and tonal scoring from speech. Custom models for regional languages and dialects. Full technology transfer included.

Enterprise Search

Voice-native search over existing indices. Structured filters — entities, date ranges, intent — extracted as users speak. Drop-in with Solr, Elastic, or custom.
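
As a rough illustration of the drop-in idea, the sketch below turns a set of extracted filters into an Elasticsearch Query DSL body. The `filters` shape and the index field names (`body`, `published`, and whatever entity fields appear) are assumptions made for this example, not Whissle's actual output schema; only the `bool`, `match`, `term`, and `range` clauses are standard Elasticsearch.

```python
from typing import Any


def build_es_query(filters: dict[str, Any]) -> dict[str, Any]:
    """Translate extracted voice filters into an Elasticsearch bool query.

    `filters` is a hypothetical structure, for example:
    {"text": "...", "entities": {"author": "..."},
     "date_range": {"gte": "...", "lte": "..."}}
    """
    must: list[dict[str, Any]] = []
    filter_clauses: list[dict[str, Any]] = []

    # The free-text part of the utterance is scored with a full-text match.
    if filters.get("text"):
        must.append({"match": {"body": filters["text"]}})

    # Each extracted entity becomes an exact, non-scoring term filter.
    for field, value in filters.get("entities", {}).items():
        filter_clauses.append({"term": {field: value}})

    # A spoken date range maps onto a range filter on an assumed date field.
    if filters.get("date_range"):
        filter_clauses.append({"range": {"published": filters["date_range"]}})

    return {"query": {"bool": {"must": must, "filter": filter_clauses}}}
```

The returned dictionary could then be sent as the body of a search request against an existing index, which is what would let the voice layer sit in front of a search backend without changing it.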

Entertainment Discovery

Voice-first recommendations for music, movies, books, and food — personalized by age, emotion, and taste. One ambient interface. Coming soon.

Text, audio, and video streamed IN, structured intelligence OUT.

META-1 extracts transcription, emotion, intent, entities, age, and gender in a single pass, capturing what is conveyed between the words. No accumulated errors, no added latency. Audio and text are supported today; video is planned.

18,189 total vocabulary tokens — 9,919 metadata + 8,270 speech tokens decoded in a single CTC beam search. Discriminative AI: grounded outputs, zero hallucination.
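
To make the single-decode idea concrete, here is a minimal sketch of how a mixed token sequence might be separated into a transcript and metadata after decoding. The tag prefixes (`EMOTION_`, `INTENT_`, and so on) and the token layout are assumptions for illustration only; the actual META-1 vocabulary and output format are not specified on this page.

```python
from dataclasses import dataclass, field

# Vocabulary sizes as stated above; the two parts sum to the total.
METADATA_TOKENS = 9_919
SPEECH_TOKENS = 8_270
TOTAL_TOKENS = METADATA_TOKENS + SPEECH_TOKENS

# Hypothetical tag prefixes marking metadata tokens in the decoded sequence.
METADATA_PREFIXES = ("EMOTION_", "INTENT_", "AGE_", "GENDER_", "ENTITY_")


@dataclass
class Decoded:
    words: list[str] = field(default_factory=list)
    metadata: dict[str, list[str]] = field(default_factory=dict)


def split_tokens(tokens: list[str]) -> Decoded:
    """Separate speech tokens from metadata tags in one decoded sequence."""
    out = Decoded()
    for tok in tokens:
        for prefix in METADATA_PREFIXES:
            if tok.startswith(prefix):
                # "INTENT_BOOK_FLIGHT" is stored under "intent" as "BOOK_FLIGHT".
                key = prefix.rstrip("_").lower()
                out.metadata.setdefault(key, []).append(tok[len(prefix):])
                break
        else:
            # No prefix matched, so this is an ordinary speech token.
            out.words.append(tok)
    return out
```

Because both kinds of token come out of the same decode, no second model has to re-read the transcript to produce the labels.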

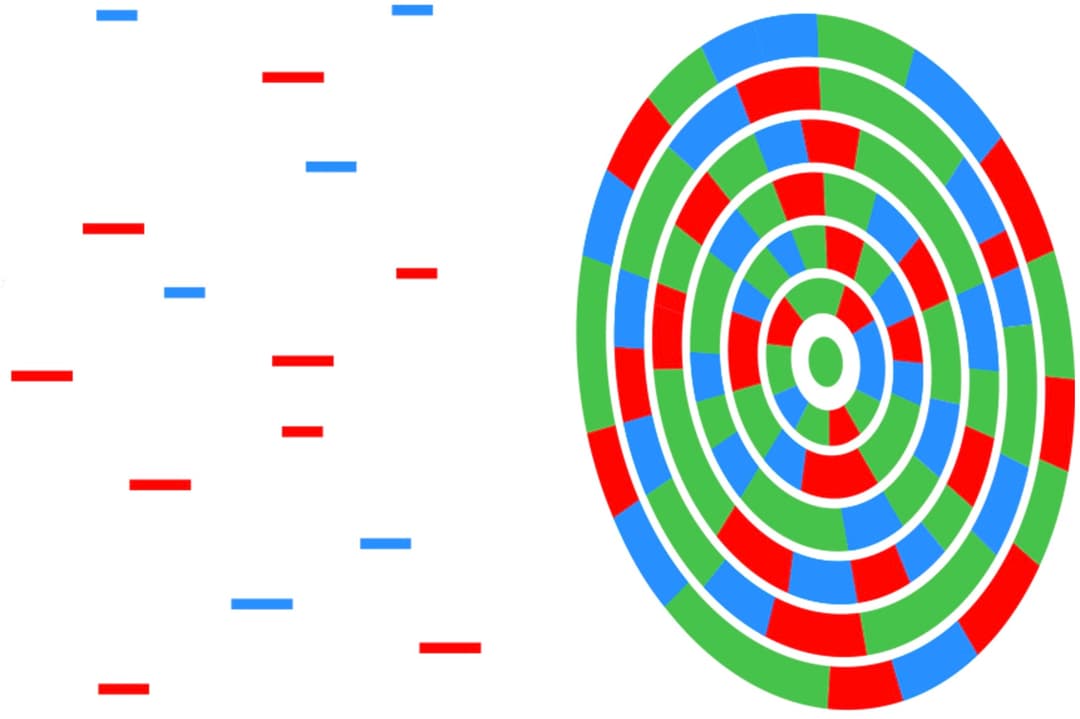

Stream2Action Architecture

Any input stream → META-1 → Structured JSON → Actions
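
The last two stages of that flow, structured JSON to actions, can be sketched as a small dispatcher. The payload fields (`transcript`, `intent`, `emotion`) and the handler names are hypothetical, chosen only to show the shape of the idea; they are not a documented Whissle schema or API.

```python
import json
from typing import Callable

Handler = Callable[[dict], str]


def dispatch(event_json: str, handlers: dict[str, Handler], fallback: Handler) -> str:
    """Route one structured event to the handler registered for its intent."""
    event = json.loads(event_json)
    # Events with a missing or unknown intent go to the fallback handler.
    handler = handlers.get(event.get("intent", ""), fallback)
    return handler(event)


def escalate(event: dict) -> str:
    # Hypothetical action: hand the call to a human agent.
    return f"escalate: {event['transcript']}"


def log_only(event: dict) -> str:
    # Hypothetical default action: record the utterance and do nothing else.
    return f"log: {event['transcript']}"
```

A caller would register one handler per intent it cares about and pass each event through `dispatch` as it arrives from the stream.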

Get Whissle

Self-host on-prem, use our cloud API, or explore the research — your data, your choice, your intelligence.

Whissle Gateway

Full voice AI stack — ASR, LLM, TTS, diarization — on a single GPU. Self-hosted Docker for on-prem. Cloud API returning soon.

Whissle Browser

AI browser with ambient voice intelligence, adaptive theming, inline annotations, and Lulu built in. Free download for macOS.

Agents Studio

Build, test, and deploy AI voice agents with Lulu. Cloud API, voice cloning, analytics dashboard — coming soon.

Developer Docs

API reference, streaming examples, and deployment guides for ASR, TTS, LLM, and voice agents.

META-1 Foundation Model

Multi-modal discriminative model — emotion, intent, age, gender, and entity detection from any input stream in a single forward pass.